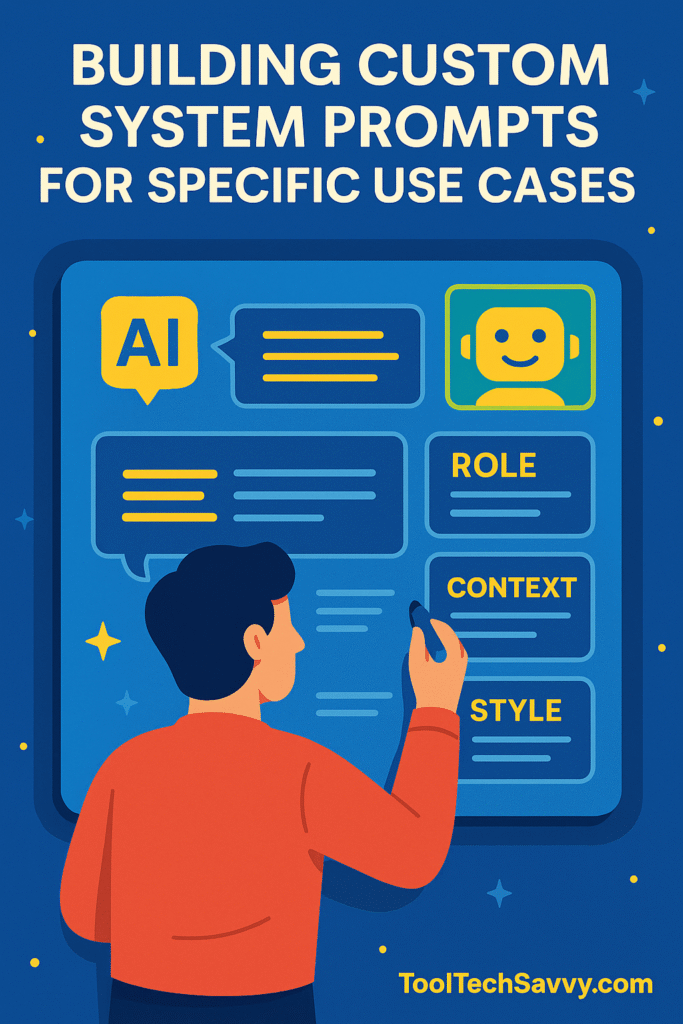

From Generic to Expert: How to Build Custom System Prompts for Precision AI

Most people focus on user prompts, the instructions they type into ChatGPT, Claude, or Gemini. But behind every great AI app or agent is something even more powerful: a well-crafted system prompt. System prompts are the invisible guideposts that shape how your AI “thinks,” responds, and behaves. Whether you’re building a personal writing assistant (see […]
