Scaling AI Efficiently: The Ultimate Guide to Production Cost Savings

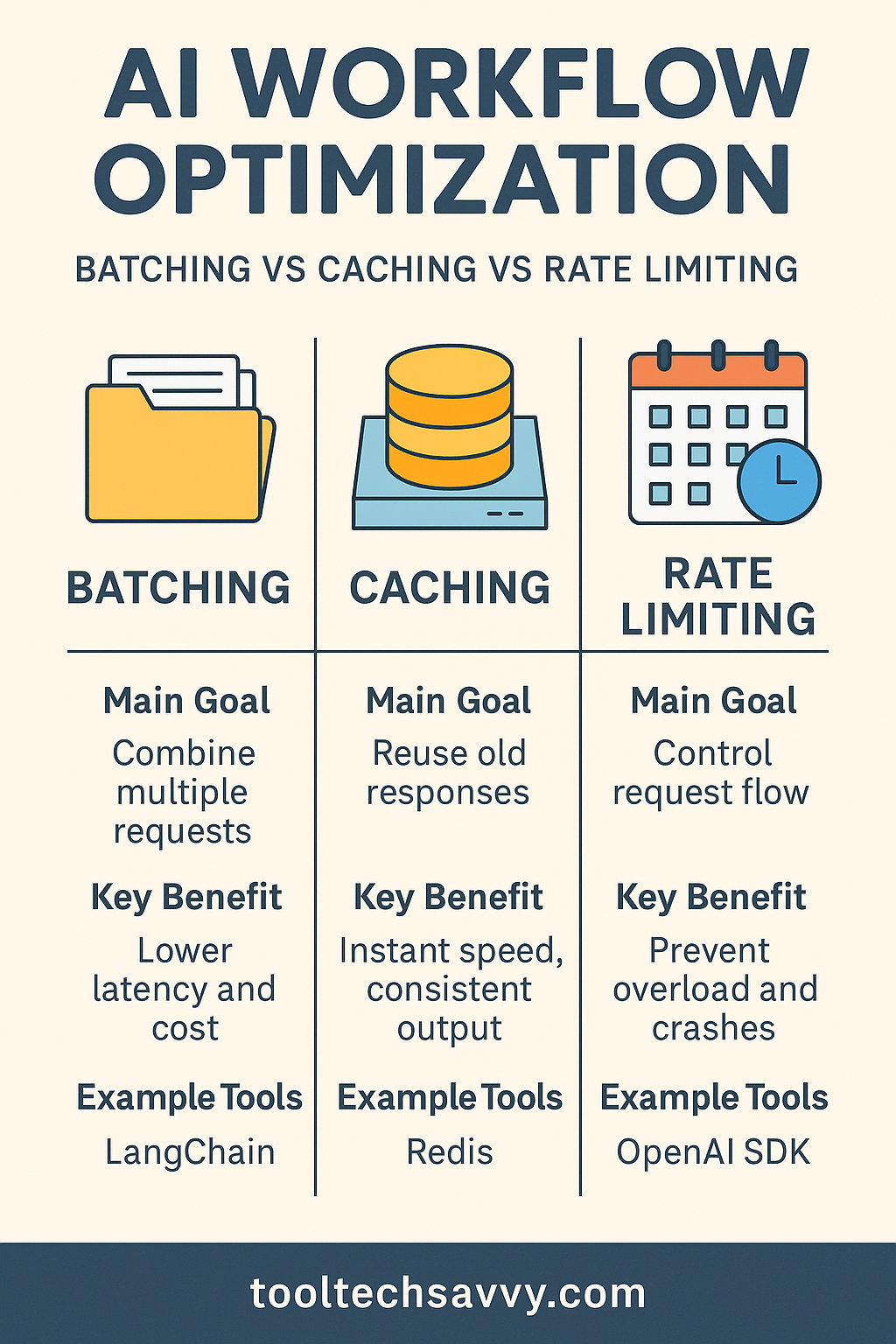

AI workloads aren’t like traditional applications: they depend on compute-heavy models, data pipelines, and APIs that bill per request. Without clear oversight, it is easy to overspend on inference calls, storage, or model fine-tuning. Treat cost control as part of AI architecture design. In fact, understanding the basics of model efficiency can help you build […]
