Neural networks are behind almost every AI breakthrough you’ve heard of in the past decade — from ChatGPT to image recognition, self-driving cars to real-time translation. But despite the hype, the core idea is surprisingly intuitive.

In this tutorial, we’ll start from scratch. No calculus, no code (yet). By the end, you’ll understand what a neural network is, where the idea came from, and how it learns — using nothing more than a good analogy and a clear diagram.

| 📌 What You’ll Learn: what a neural network is and why it matters; how the biological brain inspired the artificial neuron; what neurons, synapses, and weights actually mean in AI; how information flows through a network (with a diagram); what ‘training’ a neural network means in plain English. |

1. The Big Idea: Machines That Learn

Traditional software follows rules. A developer writes: “If the temperature is above 30°C, show a sun icon.” The computer obeys exactly. This works beautifully for well-defined problems.

But what about tasks like recognising a face in a photo? Or translating spoken Mandarin to English in real time? The rules are too complex — there are too many edge cases, too much variation. Nobody can write them all by hand.

Neural networks take a different approach: instead of following rules, they learn from examples. Show the system thousands of labelled cat photos and thousands of labelled dog photos, and it figures out the distinguishing patterns on its own. Generally, the more varied examples it sees, the better it gets.

This is the fundamental shift that neural networks introduce: from programming explicit logic to learning from data.

| 💡 Key Idea: Traditional software follows rules written by humans. Neural networks learn patterns from data. |
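For readers who like to see ideas in code, here is an optional toy sketch of that contrast. It is not a neural network: the "learning" is just picking a threshold from labelled examples. The function names, the "cloud" label, and the example temperatures are all assumptions made up for illustration.

```python
# Toy contrast between a hand-written rule and a rule fitted to data.
# All names and numbers here are illustrative assumptions.

def rule_based_icon(temperature_c):
    """Traditional software: a human chose the 30 degree threshold."""
    return "sun" if temperature_c > 30 else "cloud"


def learn_threshold(examples):
    """Pick a threshold from labelled (temperature, icon) examples.

    Uses the midpoint between the warmest "cloud" example and the
    coolest "sun" example. Real neural networks learn far richer
    patterns, but the principle (fit the behaviour to data) is the same.
    """
    warmest_cloud = max(t for t, icon in examples if icon == "cloud")
    coolest_sun = min(t for t, icon in examples if icon == "sun")
    return (warmest_cloud + coolest_sun) / 2


examples = [(18, "cloud"), (24, "cloud"), (29, "cloud"), (31, "sun"), (35, "sun")]
learned = learn_threshold(examples)


def learned_icon(temperature_c):
    """Same decision as the rule, but the threshold came from the examples."""
    return "sun" if temperature_c > learned else "cloud"
```

Nobody typed the threshold into `learned_icon`; it came out of the examples. Change the examples and the behaviour changes with them, with no code edits.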

2. Biological Inspiration: The Human Brain

The human brain contains roughly 86 billion neurons — specialised cells that form the substrate of thought, memory, perception, and action. These neurons communicate not in isolation, but in vast, interconnected networks.

2.1 How a Real Neuron Works

At the most basic level, a biological neuron does something deceptively simple: it receives signals, processes them, and — if the combined signal is strong enough — fires its own signal outward.

Here’s the anatomy of that process:

- Dendrites — The receiving antennae. Each neuron has many branching dendrites that pick up electrical signals from neighbouring neurons.

- Cell body (soma) — The processing centre. It sums up all the incoming signals from the dendrites.

- Axon hillock — The decision point. If the combined signal exceeds a certain threshold, the neuron ‘fires’.

- Axon — The output wire. Carries the electrical impulse away from the cell body.

- Synapses — The junctions between neurons. Each synapse has a variable ‘strength’ — the more often a connection is used, the stronger it becomes. This is the basis of memory and learning.

2.2 Synaptic Strength = Learning

A famous phrase in neuroscience, summarising what is known as Hebbian learning, is: “Neurons that fire together, wire together.” When two neurons frequently activate in sequence, the synapse between them strengthens. Over time, these patterns of reinforcement build memories, habits, and skills.

This biological mechanism — variable connection strength — is the direct inspiration for how artificial neural networks learn. The AI equivalent of synaptic strength is called a weight.
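As an optional illustration, the "fire together, wire together" idea can be sketched as a very simple update rule. This is a simplified textbook form, not a model of real synapses, and it is not how modern networks are trained (they use the gradient-based method described later in this post). The function name, learning rate, and activity history are assumptions chosen for the example.

```python
def hebbian_update(weight, pre_activity, post_activity, learning_rate=0.1):
    """Strengthen a connection in proportion to joint activity.

    The weight only grows when both the sending (pre) and receiving
    (post) neuron are active at the same time.
    """
    return weight + learning_rate * pre_activity * post_activity


weight = 0.2
# (pre, post) activity pairs: 1 = fired, 0 = stayed silent
history = [(1, 1), (1, 0), (0, 1), (1, 1), (1, 1)]
for pre, post in history:
    weight = hebbian_update(weight, pre, post)
```

Only the three steps where both neurons fired change the weight. Note that this basic rule can only strengthen a connection; weakening unused ones needs an extra decay term that is left out here.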

| 🧠 Analogy: Think of a neuron as a vote counter at a committee meeting. It receives votes (signals) from many colleagues (other neurons). Each colleague has a different level of influence (synaptic strength). The counter tallies the votes and raises its hand (fires) only if the weighted total exceeds its personal threshold. |

3. From Biology to Mathematics: The Artificial Neuron

In 1943, neurophysiologist Warren McCulloch and mathematician Walter Pitts published a landmark paper proposing a mathematical model of a neuron. Their artificial neuron was a radical simplification — but it captured the essential computation.

Here’s how an artificial neuron works, step by step:

- Receive inputs — Each neuron gets one or more numerical inputs (e.g. pixel brightness values, temperature readings, word embeddings).

- Apply weights — Each input is multiplied by a weight. A high weight amplifies that input’s importance; a near-zero weight diminishes it.

- Sum everything up — All the weighted inputs are added together, often with an additional term called a bias (which shifts the result).

- Apply an activation function — This is the ‘axon hillock’ equivalent. It takes the sum and decides what value to output — squashing it into a useful range, or switching it on/off.
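The four steps above can be sketched in a few lines of Python. This is an optional, minimal illustration: the input values, weights, and bias are made-up numbers, and the sigmoid and step functions are just two possible activation functions among many.

```python
import math


def sigmoid(z):
    """Squash any number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def step(z):
    """Hard on/off switch: fire (1) if the sum is non-negative, else 0."""
    return 1 if z >= 0 else 0


def neuron(inputs, weights, bias, activation=sigmoid):
    """One artificial neuron: weight the inputs, sum, add bias, activate."""
    weighted_sum = sum(w * x for w, x in zip(weights, inputs)) + bias
    return activation(weighted_sum)


inputs = [0.5, 0.8, 0.1]      # step 1: receive inputs
weights = [0.9, -0.4, 0.05]   # step 2: one weight per input
bias = 0.1                    # step 3: bias shifts the sum

output = neuron(inputs, weights, bias)               # smooth output between 0 and 1
fired = neuron(inputs, weights, bias, activation=step)  # on/off output
```

The second weight is negative, so that input counts against firing. Swapping the activation function changes how the same weighted sum is turned into an output.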

3.1 The Biological–Artificial Mapping

The table below shows how each part of a real neuron maps directly to a component of an artificial one:

| Biological Neuron | Artificial Neuron | Role |
| --- | --- | --- |
| Dendrites | Input connections (x₁, x₂, x₃) | Receive signals |
| Synapse strength | Weights (w₁, w₂, w₃) | Amplify or dampen signals |
| Cell body (soma) | Summation function (Σwx) | Accumulate signal |
| Axon hillock | Activation function | Decide: fire or not? |
| Axon / output | Output value | Pass result forward |
| Neural plasticity | Weight updates (training) | Learning over time |

4. Architecture Diagram: How a Network Is Structured

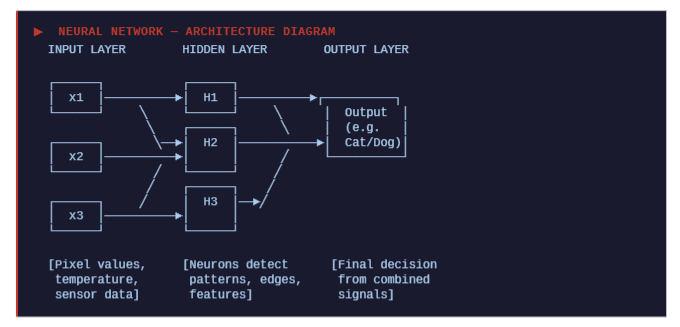

A single artificial neuron is useful, but the real power emerges when you connect many of them in layers. This is where the term ‘neural network’ earns its name. The diagram below shows a classic feedforward neural network:

4.1 Understanding the Layers

| INPUT LAYER: This is where raw data enters the network. Each input neuron holds one feature, for example the brightness of a single pixel, a temperature reading, or a word’s numeric representation. No computation happens here; it’s purely a data entry point. |

| HIDDEN LAYER(S): This is where the magic happens. In a fully connected network, each neuron in a hidden layer receives signals from every neuron in the previous layer, applies its weights, sums them, and passes the result through an activation function. In early networks, one hidden layer was common. Modern deep learning models have dozens or hundreds, hence the term ‘deep’ neural network. Each successive layer learns increasingly abstract features: early layers might detect edges in an image, later layers recognise shapes, and final layers identify complete objects. |

| OUTPUT LAYER: This final layer produces the network’s answer. For a binary classification task (cat or dog?), there might be two output neurons, one per class. For a language model generating text, the output layer might be enormous, with one neuron per entry in the vocabulary (a word or word fragment). |
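For the curious, the three kinds of layer can be sketched as an optional, plain-Python example. The layer sizes (3 inputs, 4 hidden neurons, 2 outputs), the ReLU activation, and the softmax step that turns output scores into probabilities are all assumptions chosen for illustration; the weights are random, so the prediction is meaningless until the network is trained.

```python
import math
import random


def relu(z):
    """A common activation: pass positive values, clamp negatives to 0."""
    return max(0.0, z)


def dense_layer(inputs, weights, biases, activation):
    """Fully connected layer: each row of `weights` belongs to one neuron."""
    return [
        activation(sum(w * x for w, x in zip(neuron_weights, inputs)) + b)
        for neuron_weights, b in zip(weights, biases)
    ]


def softmax(scores):
    """Turn raw output scores into probabilities that sum to 1."""
    top = max(scores)  # subtracting the max keeps exp() numerically stable
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def random_layer(n_inputs, n_neurons, rng):
    """Random starting weights and zero biases for one layer."""
    weights = [[rng.uniform(-1, 1) for _ in range(n_inputs)] for _ in range(n_neurons)]
    biases = [0.0] * n_neurons
    return weights, biases


rng = random.Random(0)
hidden_w, hidden_b = random_layer(3, 4, rng)   # input (3) -> hidden (4)
output_w, output_b = random_layer(4, 2, rng)   # hidden (4) -> output (2)


def forward(features):
    """Input layer -> hidden layer -> output layer."""
    hidden = dense_layer(features, hidden_w, hidden_b, relu)
    scores = dense_layer(hidden, output_w, output_b, lambda z: z)
    return softmax(scores)


probabilities = forward([0.2, 0.7, 0.1])  # e.g. [P(cat), P(dog)]
```

The input layer is just the list of features passed to `forward`; all the computation happens in the hidden and output layers.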

5. How a Neural Network Learns

A freshly initialised neural network is useless. Its weights are set randomly, so its outputs are essentially random guesses. Learning is the process of systematically adjusting those weights until the outputs become consistently correct.

5.1 Forward Pass

Data enters at the input layer and flows forward through the network, layer by layer, until an output is produced. This is called the forward pass. The output is the network’s current prediction.

5.2 The Loss Function

After the forward pass, we compare the network’s prediction to the correct answer. The loss function quantifies how wrong the prediction was — the higher the loss, the worse the prediction. A common loss function for classification is cross-entropy loss; for regression, it’s mean squared error.
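Both loss functions named above are short enough to sketch directly. This optional example uses made-up predictions and targets; the function names and the small `eps` guard against taking the logarithm of zero are assumptions of this sketch.

```python
import math


def mean_squared_error(predictions, targets):
    """Regression loss: average of the squared differences."""
    return sum((p - t) ** 2 for p, t in zip(predictions, targets)) / len(targets)


def cross_entropy(predicted_probs, true_class):
    """Classification loss: negative log of the probability given to the right class."""
    eps = 1e-12  # avoids log(0) if the network assigns zero probability
    return -math.log(max(predicted_probs[true_class], eps))


# The correct class is index 0 in both cases.
confident_right = cross_entropy([0.9, 0.1], true_class=0)
confident_wrong = cross_entropy([0.1, 0.9], true_class=0)

# Predictions of 2.5 and 0.0 against true values of 3.0 and 1.0.
mse = mean_squared_error([2.5, 0.0], [3.0, 1.0])
```

A confident wrong answer is penalised much more heavily than a confident right one, which is exactly the "higher loss means worse prediction" behaviour described above.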

5.3 Backpropagation

Here’s where learning actually happens. Using a mathematical technique called backpropagation, the network works backwards from the output, calculating how much each weight contributed to the error. Each weight is then adjusted slightly in the direction that reduces the loss.

This adjustment is guided by an algorithm called gradient descent. Think of it as rolling a ball downhill in a landscape where altitude represents error: the ball keeps moving in the direction of steepest descent until it settles in a valley, a low-error configuration (though not necessarily the lowest one in the whole landscape).
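The ball-rolling picture can be sketched with a single weight and a made-up loss landscape. In this optional example the loss function (a simple bowl with its lowest point at 3), the starting weight, and the step size, usually called the learning rate, are all assumptions chosen for illustration.

```python
def loss(w):
    """A bowl-shaped error landscape with its lowest point at w = 3."""
    return (w - 3.0) ** 2


def gradient(w):
    """Slope of the loss at w: positive means 'uphill to the right'."""
    return 2.0 * (w - 3.0)


w = -4.0             # starting position of the ball
learning_rate = 0.1  # how big a step to take each time

for step in range(100):
    # Move a small step against the slope, i.e. downhill.
    w = w - learning_rate * gradient(w)
```

After enough steps, `w` ends up very close to 3, the bottom of the valley. A real network does the same thing for every weight at once, with backpropagation supplying the slopes.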

| ⚡ In Practice: Training a large neural network involves repeating this cycle (forward pass → compute loss → backpropagate → update weights) millions or billions of times across vast datasets. This is why AI training is so computationally intensive and why GPUs (or specialised AI chips like TPUs) are essential. |
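As an optional preview, here is the whole cycle for the smallest possible case: a single sigmoid neuron learning the logical AND of two inputs. It is a sketch under stated assumptions, not a general implementation: the task, learning rate, epoch count, and function names are made up for illustration, and with only one neuron the "backpropagation" step reduces to one line (for a sigmoid output with cross-entropy loss, the error signal is simply prediction minus target).

```python
import math
import random


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def predict(weights, bias, x):
    """Forward pass for one neuron."""
    return sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias)


def average_loss(weights, bias, data):
    """Mean cross-entropy loss over the dataset."""
    eps = 1e-12
    total = 0.0
    for x, y in data:
        p = predict(weights, bias, x)
        total += -(y * math.log(p + eps) + (1 - y) * math.log(1 - p + eps))
    return total / len(data)


def train(data, epochs=2000, learning_rate=0.5, seed=42):
    rng = random.Random(seed)
    weights = [rng.uniform(-0.5, 0.5) for _ in range(2)]  # random start
    bias = 0.0
    for _ in range(epochs):          # one epoch = one pass over the data
        for x, y in data:
            p = predict(weights, bias, x)   # 1. forward pass
            error = p - y                   # 2-3. loss gradient at the neuron's sum
            # 4. update: nudge each weight against its share of the error
            weights = [w - learning_rate * error * xi for w, xi in zip(weights, x)]
            bias -= learning_rate * error
    return weights, bias


# Logical AND: output 1 only when both inputs are 1.
data = [([0, 0], 0), ([0, 1], 0), ([1, 0], 0), ([1, 1], 1)]

untrained_loss = average_loss([0.0, 0.0], 0.0, data)
weights, bias = train(data)
trained_loss = average_loss(weights, bias, data)
```

After training, rounding `predict(weights, bias, x)` gives the correct answer for all four input pairs, and the loss is far lower than for an untrained neuron. Large networks run this same loop, just with many more weights and far more data.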

6. Types of Neural Networks (A Quick Map)

The architecture we described — input layer, hidden layers, output layer — is the foundation. But there are many specialised variants, each suited to different kinds of data:

- Feedforward Neural Networks (FNN) — The classic architecture described in this post. Data flows in one direction only.

- Convolutional Neural Networks (CNN) — Designed for image data. They use specialised layers that scan for local patterns (edges, textures) before assembling them into a full picture.

- Recurrent Neural Networks (RNN) — Designed for sequential data like text or audio. They have connections that loop back, giving the network a form of short-term memory.

- Transformers — The architecture behind GPT, Claude, and most modern language models. They use a mechanism called self-attention to process relationships between all parts of an input simultaneously.

- Generative Adversarial Networks (GAN) — Two networks compete: one generates synthetic data, the other tries to detect fakes. The result is a generator that can produce highly realistic images, audio, or video.
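To give a flavour of one of these variants, the "scan for local patterns" idea behind CNNs can be sketched on a single row of pixels. This optional example is heavily simplified: real CNNs work on 2-D images and learn their kernel values during training, whereas the pixel row and the hand-picked edge kernel here are assumptions for illustration.

```python
def convolve_1d(signal, kernel):
    """Slide `kernel` along `signal`, taking a weighted sum at each position.

    Strictly speaking this is cross-correlation (the kernel is not flipped),
    which is what deep learning libraries usually call convolution.
    """
    k = len(kernel)
    return [
        sum(signal[i + j] * kernel[j] for j in range(k))
        for i in range(len(signal) - k + 1)
    ]


row_of_pixels = [0, 0, 0, 1, 1, 1, 0, 0]  # dark, then bright, then dark
edge_kernel = [-1, 1]                     # responds to changes in brightness

response = convolve_1d(row_of_pixels, edge_kernel)
# response is [0, 0, 1, 0, 0, -1, 0]: +1 at the dark-to-bright edge,
# -1 at the bright-to-dark edge, 0 wherever brightness is flat.
```

The same two weights are reused at every position, which is why convolutional layers need far fewer weights than fully connected ones.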

7. Real-World Applications

Neural networks are not a theoretical curiosity — they are deployed at massive scale across virtually every industry:

- Healthcare: Diagnosing cancers from medical scans, predicting patient deterioration, accelerating drug discovery.

- Finance: Detecting fraudulent transactions in real time, algorithmic trading, credit risk modelling.

- Language: Machine translation, summarisation, code generation, customer service chatbots.

- Vision: Autonomous vehicles, facial recognition, product quality inspection on manufacturing lines.

- Science: AlphaFold’s revolutionary protein structure prediction — arguably the biggest single scientific advance AI has enabled to date.

8. Key Terminology — Quick Reference

| Term | Plain English Meaning |
| --- | --- |
| Neuron | A single processing unit that receives inputs, computes a weighted sum, and fires an output. |
| Weight | A number that controls how strongly one neuron influences another. Adjusted during training. |
| Bias | An extra parameter that shifts the neuron’s activation, giving it more flexibility. |
| Activation function | A mathematical function that decides how strongly a neuron fires (e.g. ReLU, sigmoid, tanh). |
| Layer | A group of neurons at the same ‘depth’ in the network: input, hidden, or output. |
| Forward pass | The process of sending data through the network from input to output to get a prediction. |
| Loss function | A measure of how wrong the network’s prediction is compared to the correct answer. |
| Backpropagation | The algorithm that calculates how much each weight contributed to the error. |
| Gradient descent | The optimisation strategy that adjusts weights step by step to reduce the loss. |
| Epoch | One complete pass through the entire training dataset. |
| Deep learning | Neural networks with many hidden layers (typically more than two). |

Conclusion

Neural networks are one of those ideas that seem intimidating from the outside but become intuitive the moment you see the biological parallel. Nature spent hundreds of millions of years evolving a learning system, the brain, built on simple, interconnected units that strengthen useful connections and weaken unused ones. Artificial neural networks loosely mirror that principle in mathematics, and the results have been transformative.

You now understand:

- Where neural networks come from — the biological neuron and synapse

- How an artificial neuron works — inputs, weights, summation, activation

- How layers of neurons form a network — input → hidden → output

- How the network learns — forward pass, loss, backpropagation, gradient descent

In our next post, we’ll take these concepts into code — building a simple neural network from scratch in Python, and training it on real data. Stay tuned.

| Enjoyed this tutorial? We publish in-depth guides on AI tools, developer workflows, and cloud platforms every week. Come explore more on the blog. → Read more at tooltechsavvy.com |